Live Video Streaming: A Monitoring Perspective

Broadcast video was traditionally transmitted in a linear format, that is, according to a predetermined transmission schedule. Content was distributed over the air to a TV antenna (ATSC), via DTH (direct-to-home) satellite, or over a cable connection from MSOs (multiple-system operators).

This traditional model of transmission is now rapidly evolving toward IP-based distribution via video streaming services. With the convergence between providers of live content such as sports and news and providers of video-on-demand (VOD) content such as movies and TV shows, video streaming is now at the core of broadcast technologies.

Revenue models in the broadcast industry have evolved in tandem, with the traditional advertisement revenues for linear TV transmission now being complemented by highly targeted advertisements based upon the personal preferences of individual streaming viewers. Many broadcasters now offer content to customers via subscriptions, via FAST (Free Ad-Supported Streaming TV), or some combination thereof.

Ubiquitous network infrastructure and a massive build-out of datacenters, coupled with IP streaming, are at the center of this ability to distribute content across the globe.

On-demand vs Live - what are the latency implications?

Video-on-demand (VOD) content such as movies and TV shows does not have the same transmission latency requirements as live content such as sporting events, where transmission delays can cause serious customer dissatisfaction.

For VOD content, video files (movies and TV shows) are split into small segments of a few seconds each, encoded, encrypted and stored on “origin servers”.

VOD content distribution therefore looks more like “file distribution”. The HLS and DASH streaming standards are examples of this segment-based approach.

When a customer wishes to view content, their playback device (set-top box, smart TV, HDMI stick, etc.) contacts the “edge server” nearest to them and requests the content. If the edge server does not already have the content cached, it requests it from the origin server, receives it, and passes it along to the viewer.

There are obviously requirements to minimize latency. However, since the video is available as small pre-encoded segments, latency is governed by the time it takes to transfer these files to the viewer’s playback device, and encoding latency plays no role. Further, since the viewer has selected a particular item to view, the playback device can begin caching additional segments for that selection, knowing that they will be needed next.
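The cache-miss flow described above can be sketched in a few lines of Python. This is only an illustrative model, not how any particular CDN is implemented: the `EdgeCache` class, the `fake_origin` function and the `(title, index)` segment key are all hypothetical names introduced for this example.

```python
from typing import Callable, Dict, Tuple

# Hypothetical cache key: (title id, segment index).
SegmentKey = Tuple[str, int]


class EdgeCache:
    """Toy edge server: serve from cache, fall back to the origin on a miss."""

    def __init__(self, fetch_from_origin: Callable[[str, int], bytes]) -> None:
        self._fetch_from_origin = fetch_from_origin
        self._cache: Dict[SegmentKey, bytes] = {}
        self.origin_requests = 0  # counts how often the origin was contacted

    def get_segment(self, title: str, index: int) -> bytes:
        key = (title, index)
        if key not in self._cache:
            # Cache miss: request the pre-encoded segment from the origin.
            self.origin_requests += 1
            self._cache[key] = self._fetch_from_origin(title, index)
        return self._cache[key]


def fake_origin(title: str, index: int) -> bytes:
    # Stand-in for an origin server holding pre-encoded segments.
    return f"{title}-segment-{index}".encode()


edge = EdgeCache(fake_origin)
first = edge.get_segment("movie-42", 0)   # miss: fetched from the origin
second = edge.get_segment("movie-42", 0)  # hit: served from the edge cache
```

Only the first request reaches the origin; the second is answered from the edge, which is why segment transfer time, rather than encoding time, dominates VOD latency.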

With live transmission, matters are a little different: minimizing transmission latency is of much greater importance. Further complicating matters, content that is “live” by its very nature cannot be cached in advance. A segment becomes available only at the actual “time of the event”, plus any latency to encode and distribute it.

As a matter of fact, it is not unusual to transmit live video directly to the viewer in the form of an MPEG Transport Stream (TS). An MPEG TS is a continuous stream of video with no segmentation into files, and it has been a workhorse technology for many years. MPEG TS is codec agnostic, meaning that it can carry MPEG-2, H.264 and H.265 (HEVC) video along with AAC, MP3, Dolby Digital+ and other audio compression formats.

This technology has been used for transmission of video via satellite and cable, and it is also the transport layer of the ATSC 1.0 over-the-air TV standard. MPEG TS latency has two basic components: the encoding latency and the transmission latency. MPEG TS is a vital technology for live events and for “backhaul” applications, where video signals may be sent over large distances, for example from a stadium to a remotely located production center. It is also ubiquitous in video distribution applications and is often the technology of choice for content aggregators and distributors.

What is MPEG SRT?

While MPEG TS has been around for years, it has more recently been enhanced by the adoption of the Secure Reliable Transport (SRT) protocol. SRT was originally developed by Haivision and is now an open-source protocol promoted by the SRT Alliance. It addresses some of the limitations of carrying MPEG TS over IP networks.

SRT can transmit high-quality video and audio with low latency over unreliable networks. It offers control over the amount of latency and mitigates issues such as jitter and packet loss on poor networks. SRT also makes it easy to traverse firewalls and is economical to deploy over existing network infrastructure. Additionally, SRT offers secure streaming with up to 256-bit AES encryption.

What is MPEG?

MPEG is an acronym for the “Moving Picture Experts Group”, a standards-setting body that has done an incredible job of producing globally accepted standards for the compression of video and audio for transmission over long distances. Numerous individuals and companies have made significant contributions to this body and the associated standards. We all benefit from this immense body of work in our ability to reliably and securely transmit and receive audio and video signals across the world.

Without getting too technical, at a high level, there are two parts that the standard specifies. One relates to the compression of audio and video, and the second to the transportation of compressed signals. The standard has been built to be extremely flexible, with the ability to transmit video and audio along with additional metadata as well as closed captions and subtitles, and if needed additional custom data via an ancillary data channel.

Transport Stream Hierarchy

An MPEG encoder constructs and streams a transport stream. A transport stream is a packetized stream of compressed audio, video and closed captions, along with additional metadata.

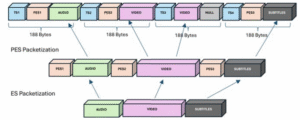

A TS is built hierarchically, beginning with the output of the audio and video encoder section. First, encoded audio and video along with subtitles/closed captions are compiled into Elementary Streams (ES). Packets are identified by their distinct Packet ID, or PID. Each ES is then packetized into a Packetized Elementary Stream (PES), with elementary stream PIDs identifying the related audio, video and subtitles/CCs.

A single transport stream can contain a bouquet of “Programs”. Each Program has an associated set of Packetized Elementary Streams (PESs), which typically carry the audio, video and subtitle/closed caption Elementary Streams (ESs) for that Program.

Transport Stream Format

The Sync Byte (0x47) marks the beginning of each MPEG-2 transport stream (TS) packet, serving as a synchronization indicator for packet boundaries.
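As a rough illustration of how a receiver might use the sync byte, the sketch below searches a byte buffer for an offset at which 0x47 repeats at the fixed 188-byte TS packet interval. The function name `find_sync_offset` and the choice of checking three consecutive packets are assumptions made for this example; real demultiplexers may use different lock criteria.

```python
TS_PACKET_SIZE = 188  # bytes in a standard MPEG-2 TS packet
SYNC_BYTE = 0x47


def find_sync_offset(data: bytes, packets_to_check: int = 3) -> int:
    """Return the first offset where 0x47 repeats every 188 bytes, or -1.

    A single 0x47 can also occur inside a payload, so we require the sync
    byte at `packets_to_check` consecutive packet boundaries.
    """
    for offset in range(min(TS_PACKET_SIZE, len(data))):
        positions = range(
            offset,
            offset + packets_to_check * TS_PACKET_SIZE,
            TS_PACKET_SIZE,
        )
        if positions[-1] < len(data) and all(
            data[p] == SYNC_BYTE for p in positions
        ):
            return offset
    return -1


# Three synthetic packets (sync byte plus zero padding) after 2 junk bytes.
packet = bytes([SYNC_BYTE]) + bytes(TS_PACKET_SIZE - 1)
stream = b"\x00\x01" + packet * 3
offset = find_sync_offset(stream)  # 2
```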

The PID (Packet Identifier) field within each TS packet identifies the type of payload contained in the packet. Different PID values correspond to different components of the transport stream described as follows:

The Program Association Table (PAT) lists all programs available in the stream along with the PIDs of their respective Program Map Tables (PMTs).

The Program Map Table (PMT) contains detailed information about the elementary streams associated with a specific program. It lists all elementary streams, such as audio and video streams, along with their corresponding PIDs.

The Network Information Table (NIT) provides network-related information and descriptors within the MPEG-2 transport stream. It includes details about the network structure, service providers, and other relevant information.

Elementary Streams within the MPEG-2 transport stream are identified by their PIDs and contain the actual audio, video, or other data transmitted over the network.
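To make the PID and PAT descriptions above more concrete, here is a minimal sketch of extracting the 13-bit PID from a TS packet header and reading the program list from a PAT section. The field offsets follow the MPEG-2 systems layout, but the function names are hypothetical, the sample bytes are synthetic, and the CRC at the end of the section is not verified, so treat this as an illustration rather than a complete parser.

```python
from typing import Dict

PAT_PID = 0x0000  # the PAT is always carried on PID 0


def parse_pid(packet: bytes) -> int:
    """Extract the 13-bit PID from a 188-byte TS packet."""
    if len(packet) != 188 or packet[0] != 0x47:
        raise ValueError("not a valid TS packet")
    # PID = low 5 bits of byte 1 followed by all 8 bits of byte 2.
    return ((packet[1] & 0x1F) << 8) | packet[2]


def parse_pat_section(section: bytes) -> Dict[int, int]:
    """Map program_number -> PMT PID from a PAT section.

    Program number 0, if present, points at the network PID (NIT)
    rather than a PMT. The trailing CRC_32 is skipped, not checked.
    """
    if section[0] != 0x00:
        raise ValueError("table_id is not 0x00 (PAT)")
    section_length = ((section[1] & 0x0F) << 8) | section[2]
    end = 3 + section_length - 4  # stop before the 4-byte CRC_32
    programs: Dict[int, int] = {}
    for i in range(8, end, 4):  # the program loop starts after 8 header bytes
        program_number = (section[i] << 8) | section[i + 1]
        pid = ((section[i + 2] & 0x1F) << 8) | section[i + 3]
        programs[program_number] = pid
    return programs


# Synthetic packet header on PID 0, padded to 188 bytes.
pat_packet = bytes([0x47, 0x40, 0x00, 0x10]) + bytes(184)
pid = parse_pid(pat_packet)

# Synthetic PAT section: one program (number 1) whose PMT is on PID 0x0100.
# The last four bytes are a placeholder where the CRC_32 would be.
pat_section = bytes([
    0x00, 0xB0, 0x0D,        # table_id, section_length = 13
    0x00, 0x01,              # transport_stream_id
    0xC1, 0x00, 0x00,        # version/current_next, section numbers
    0x00, 0x01, 0xE1, 0x00,  # program 1 -> PID 0x0100
    0x00, 0x00, 0x00, 0x00,  # CRC_32 placeholder (not validated here)
])
programs = parse_pat_section(pat_section)
```

A monitor would then read the PMT on each listed PID to discover the audio, video and caption PIDs that an operator can select for decoding.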

What are monitoring requirements for MPEG SRT?

Monitoring an MPEG SRT stream involves ensuring that all the elements described above are correct. As indicated earlier, an MPEG SRT stream is a bouquet of “Programs”, each of which has its own audio, video and CC/subtitle tracks. Program audio and video are compressed, and the entire bouquet is transmitted as a composite stream.

From an operator’s perspective, monitoring imperatives don’t change, and they still need to have the ability to conveniently view video and audio meters, along with the ability to listen to each individual audio channel and inspect loudness for any audio track within a Program to ensure compliance with loudness standards.
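As a simple illustration of what an audio meter computes on decoded audio, the sketch below measures sample peak and RMS level in dBFS and flags silence. It assumes PCM samples already normalized to the range -1.0 to 1.0, and the -60 dBFS silence threshold is an arbitrary value chosen for the example. Note that this is not a loudness measurement in the sense of standards such as ITU-R BS.1770, which additionally apply K-weighting and gating.

```python
import math
from typing import Sequence


def peak_dbfs(samples: Sequence[float]) -> float:
    """Sample-peak level in dBFS for samples normalized to [-1.0, 1.0]."""
    peak = max((abs(s) for s in samples), default=0.0)
    return 20 * math.log10(peak) if peak > 0 else float("-inf")


def rms_dbfs(samples: Sequence[float]) -> float:
    """RMS level in dBFS for samples normalized to [-1.0, 1.0]."""
    if not samples:
        return float("-inf")
    mean_square = sum(s * s for s in samples) / len(samples)
    return 10 * math.log10(mean_square) if mean_square > 0 else float("-inf")


def is_silent(samples: Sequence[float], threshold_dbfs: float = -60.0) -> bool:
    """Flag a block of audio whose RMS level falls below the threshold."""
    return rms_dbfs(samples) < threshold_dbfs


full_scale = [1.0, -1.0, 1.0, -1.0]   # full-scale square wave
digital_silence = [0.0] * 480         # e.g. 10 ms of silence at 48 kHz

full_scale_peak = peak_dbfs(full_scale)    # 0.0 dBFS
silence_flag = is_silent(digital_silence)  # True
```

Running such a check per audio channel over short blocks is one way an audio-silence alarm could be raised; a production monitor would typically also require the condition to persist for a configurable duration.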

Wohler's iVAM2-MPEG

Wohler recently launched its iVAM2-MPEG monitor, which is capable of decoding MPEG SRT transport streams carrying MPEG-2, H.264 or H.265 (HEVC) video at bitrates as high as 35 Mbps. The unit parses transport streams and presents operators with PAT/PMT/PID tables, enabling independent selection of audio and video PIDs for decoding via a simple, intuitive touch screen interface.

AAC, Dolby Digital+ and MP3 audio formats are supported. This 2RU unit with a 2×4.3″ LCD interface accepts MPEG signals over IP (RJ45) or over an ASI input. The easy-to-operate touch screen interface is complemented by a built-in web server, enabling operators to configure and upgrade the unit and monitor audio meters remotely.

Fully integrated with MAVRIC, Wohler’s advanced remote monitoring software, the iVAM2-MPEG enables customers to tap into MPEG streams anywhere within their fabric and view the decoded MPEG video and audio content from any location in the world. Built-in detection and alarming for SCTE tags, along with other error conditions such as frozen video or audio silence, helps identify problems quickly.

Visit Wohler in Hall 10, Stand 10.B12 at IBC2025 in Amsterdam for more information. Free Registration Code: IBC1807