Everything IP. Global Remote Monitoring and MPEG SRT - finally

With the advent of IP, the broadcast industry is seeing a large upgrade towards IP infrastructure such as ST2110, AES67, Dante or Ravenna. ST2110 is a key standard that has been brilliantly conceived to map SDI into IP transport as a starting point.

However, ST2110 goes much further and offers immense additional benefits and significant advantages over SDI.

Some of these advantages are:

Bandwidth Conservation: Since each component of SDI (video, audio and metadata) is transmitted as a separate stream, devices can choose to transmit or receive only the streams they are interested in, without needing to support the entire bandwidth (e.g. 12G). For example, a microphone can stream only audio, and a camera can stream only video. Conversely, an audio mixing console can choose to receive only the audio stream, and a video scaler can choose to receive only the video stream.

Multi-signal Transmissions over single link: Depending upon the capacity of the “pipe” (for example, a 100Gbps network), multiple ST2110 streams can be transmitted over the same cable or fiber, unlike SDI, which allows only a single signal per cable. This leads to simplified cabling and a large reduction in required copper wiring.
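As a rough illustration of how many streams fit in one pipe, the arithmetic can be sketched as below. This is a back-of-the-envelope estimate, not a network design tool: it counts only active picture data, ignores RTP/UDP/IP packet overhead, and the 20% headroom figure and function names are assumptions chosen for the example.

```python
def uncompressed_video_gbps(width, height, fps, bits_per_pixel):
    """Active-picture bit rate in Gbps (decimal), ignoring packet overhead."""
    return width * height * fps * bits_per_pixel / 1e9


def streams_per_link(link_gbps, stream_gbps, headroom=0.2):
    """Whole streams that fit after reserving a fraction of the link as headroom.

    The 20% default headroom is an illustrative assumption, not a recommendation.
    """
    usable = link_gbps * (1.0 - headroom)
    return int(usable // stream_gbps)


# 1080p at 60000/1001 fps; 4:2:2 10-bit sampling averages 20 bits per pixel.
hd = uncompressed_video_gbps(1920, 1080, 60000 / 1001, 20)
print(f"1080p59.94 stream: {hd:.2f} Gbps")
print(f"Streams on a 100 Gbps link: {streams_per_link(100, hd)}")
```

Audio and ancillary data streams are tiny by comparison, which is why a receiver that subscribes only to audio needs a small fraction of the bandwidth of the full signal.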

Simplified Workflows: Since audio, video and closed captions are in their own separate streams, production workflows are greatly simplified. For example, switching audio in and out (e.g., stadium vs commentary) does not require re-embedding as it does with SDI; the required audio is simply streamed directly.

Reliability and Redundancy: IP networks can be easily peered for redundancy, creating resilient, fault-tolerant and reliable networks (using ST2022-7, an earlier standard for seamless protection switching, alongside ST2110).
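The idea behind this kind of redundancy is that the same packets travel over two independent paths and the receiver keeps one copy of each. The sketch below shows that merge step only. It is a simplification: a real receiver works on live RTP packets with a bounded reorder buffer and handles sequence number wraparound, and the function name and tuple layout here are hypothetical.

```python
def merge_redundant_paths(path_a, path_b):
    """Merge two copies of one stream, keeping one packet per sequence number.

    Each path is an iterable of (sequence_number, payload) tuples. When both
    paths deliver the same sequence number, path A's copy is kept.
    Simplifications: sequence numbers are assumed not to wrap, and the result
    is sorted in one pass instead of being released from a reorder buffer.
    """
    seen = {}
    for seq, payload in list(path_a) + list(path_b):
        seen.setdefault(seq, payload)
    return [(seq, seen[seq]) for seq in sorted(seen)]


path_a = [(1, b"v1"), (2, b"v2"), (4, b"v4")]  # packet 3 lost on path A
path_b = [(1, b"v1"), (3, b"v3"), (4, b"v4")]  # packet 2 lost on path B
merged = merge_redundant_paths(path_a, path_b)
print([seq for seq, _ in merged])
```

As long as a given packet survives on at least one path, the merged stream has no gap.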

Video Compression: The ST2110 standards allow for compressed video in addition to compressed audio (unlike SDI, which allows compressed audio only), via video compression technologies like JPEG-XS.

The ST2110 Standard

The ST2110 standard is not a singular entity, but rather a collection of standards that enable a great deal of flexibility in accommodating various signals and formats, with a highly precise, microsecond-resolution Precision Time Protocol (PTP) time-base. The beauty of the standard is that it does not invent any specific new protocols or standards. On the contrary, it provides a framework to utilize preexisting IEEE and IETF standards to achieve its goals. To retain low latencies, content transport is UDP based, with reliability provided by network design and path redundancy rather than by retransmission.

ST2110 - Sections of the standard specify each aspect of transport

- Timing and sync (PTP: Precision Time Protocol) is specified in ST2110-10.

- The video portion of SDI is sent out as a packetized stream (UDP multicast) over IP networks, specified in ST2110-20.

- Audio information, which is in the HANC (Horizontal Ancillary) portion of the SDI signal, is sent as a separate stream specified in ST2110-30.

- Closed captions and other metadata in the VANC (Vertical Ancillary) portion of the SDI signal are sent out as another stream specified in ST2110-40.

- Compressed audio is specified in ST2110-31 (AES3, Dolby Digital+).

- Compressed video is specified in ST2110-22 (JPEG-XS).
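Each of these essence streams is carried as RTP packets over UDP, so a monitoring tool can identify and order packets from the fixed RTP header defined in RFC 3550. The following is a minimal sketch of decoding that 12-byte header. The sample packet is hand-built for illustration, and payload type 96 is simply a value from the dynamic range, not one mandated for any particular stream.

```python
import struct


def parse_rtp_header(packet: bytes) -> dict:
    """Decode the 12-byte fixed RTP header (RFC 3550)."""
    if len(packet) < 12:
        raise ValueError("RTP packet shorter than the fixed header")
    b0, b1, seq, timestamp, ssrc = struct.unpack("!BBHII", packet[:12])
    return {
        "version": b0 >> 6,
        "padding": bool(b0 & 0x20),
        "extension": bool(b0 & 0x10),
        "csrc_count": b0 & 0x0F,
        "marker": bool(b1 & 0x80),
        "payload_type": b1 & 0x7F,
        "sequence_number": seq,
        "timestamp": timestamp,
        "ssrc": ssrc,
    }


# Hand-built sample: version 2, marker bit set, payload type 96 (illustrative).
sample = struct.pack("!BBHII", 0x80, 0x80 | 96, 1234, 90000, 0xDEADBEEF) + b"payload"
header = parse_rtp_header(sample)
print(header)
```

The sequence number is what lets a receiver detect loss and reordering, and the timestamp is what ties each packet back to the shared PTP time-base.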

Compressed IP: For backhaul and distribution applications

The ST2110 standard is designed to carry uncompressed audio/video signals, as well as compressed audio/video. An example of compressed video is JPEG-XS. The key advantage of the JPEG-XS codec is the ability to offer a smooth trade-off between network bandwidth and video quality. Having a well-defined and smooth relationship between video quality and transmission bandwidth makes the JPEG-XS standard ideal for backhaul transmission links, where the need to maximize video quality for the available bandwidth is critical to maintaining high quality production.

Further, the maturation of the SRT standard for transporting MPEG compressed video is a valuable addition to the toolkit for video distribution applications. SRT (Secure Reliable Transport) addresses numerous concerns with MPEG-TS transmission.

Some of those are:

Security and Reliability

- End-to-End Encryption: SRT uses AES encryption to secure video streams.

- Packet Loss Recovery: It employs techniques like Automatic Repeat reQuest (ARQ) to recover lost packets, ensuring smooth playback.

Performance

- Low Latency: SRT maintains low latency, typically around 120 milliseconds, making it suitable for live streaming.

- Adaptability: The protocol dynamically adjusts to changing network conditions, minimizing jitter and bandwidth fluctuations.

Compatibility

- Codec Agnostic: SRT can transport any video format, codec, resolution, or frame rate.
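The packet loss recovery idea mentioned above (ARQ) can be sketched from the receiver's side: track the next expected sequence number, and when a gap appears, ask the sender to retransmit the missing packets. This is a conceptual illustration only, not the SRT wire protocol. It omits timers, the latency window after which a late packet is abandoned, and sequence number wraparound, and the class and method names are hypothetical.

```python
class LossDetector:
    """Simplified ARQ receiver: reports gaps in the sequence as retransmit requests."""

    def __init__(self, first_seq=0):
        self.next_expected = first_seq
        self.missing = set()

    def on_packet(self, seq):
        """Process one arriving packet and return sequence numbers to re-request."""
        if seq in self.missing:        # a retransmission filled an earlier gap
            self.missing.discard(seq)
            return []
        if seq < self.next_expected:   # duplicate or already handled, ignore
            return []
        gap = list(range(self.next_expected, seq))
        self.missing.update(gap)
        self.next_expected = seq + 1
        return gap


detector = LossDetector()
# Packets 2 and 3 are lost at first, then arrive as retransmissions.
requests = [detector.on_packet(seq) for seq in (0, 1, 4, 2, 3, 5)]
print(requests)
print(detector.missing)
```

The latency budget is what makes this workable for live video: the receiver holds packets briefly so that a retransmission can arrive before the gap would reach the decoder.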

Multimode vs Single-mode ST2110

While ST2110 has been available for a while now, most early evaluation work was done with multimode fiber. The inherent limitation of multimode fiber is a maximum distance of 550 meters for 10Gbps links. This worked well in the initial test and qualification phase, where transport distances were relatively small. With the installation of “production-ready” fiber infrastructure, it is not unusual to see significantly longer fiber runs. As a result, a move to single-mode fiber is now seen as unavoidable.

New workflows for Contribution, Production and Distribution

The advent of these new technologies has enabled significant improvements to traditional broadcast workflows. One key factor is that IP transport has opened the door to moving broadcast audio and video signals into the Cloud for further processing, with various operations performed on the signals in software. The Cloud itself offers major advantages, with the ability to migrate towards a pay-per-use model for processing, rather than a permanent, CAPEX-intensive equipment purchase model.

After signals are processed in the Cloud, they can be brought back to physical broadcast centers for additional processing or downstream distribution. Processing applications in the Cloud enable collaboration with remote talent, so productions are no longer limited to in-person talent. Functions like closed captioning, subtitling or alternate language tracks can now all be created in real time by remotely located contributors.

One excellent example of a Cloud-based application for broadcast is the Cloud playout system. In this instance, signals from various sources, including contribution feeds, are brought into the Cloud. They are appropriately selected, spliced, recorded, reformatted as needed and finally conformed entirely in the Cloud. Distribution to online platforms like YouTube can be accomplished with a direct feed from these systems. Alternatively, conformed signals can be downlinked for further processing prior to transmission.

The Monitoring Challenge

While the transition to these new IP technologies has been extremely advantageous, it is no surprise that their adoption creates a huge challenge from a monitoring perspective. Signals are no longer available to monitor in a single physical location. They may flow across various pathways in physical locations, into the Cloud, and across various pathways in the Cloud before potentially returning to physical locations. Further, signals might be uncompressed, compressed or formatted in any of a whole host of IP or baseband signal formats.

The challenge is that operators still need to be able to view video and meters and listen to audio channels, irrespective of signal format, transport or location.

There is therefore a need for a unified and comprehensive approach to monitoring. At Wohler, we have specialized in broadcast signal monitoring for 41+ years and understand the scope of the challenge. Our close partnership with our customers, as well as with technology companies that have contributed towards these IP-based technologies, places us at a unique vantage point. On the one hand, we have a good understanding of the monitoring terrain and associated challenges, while on the other hand our partnership with various IP technology companies has enabled us to build monitoring products that match our customers’ expectations.

MAVRIC: Advanced Remote Monitoring

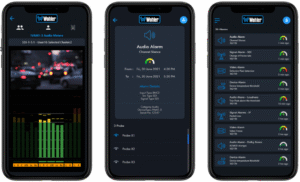

Our recently launched MAVRIC, an advanced remote monitoring software solution, is a great example of such a tool. MAVRIC is an application that runs in the Cloud and communicates with audio and video monitoring probes. Probes are installed as optional expansion cards in Wohler’s in-rack monitors or are available as openGear cards. Probes handle baseband as well as IP signals and transmit compressed audio and video to MAVRIC. MAVRIC provides operators with a web-based browser interface to view video, listen to audio and see audio meters as well as audio loudness for any selected channel coming from remote probes. MAVRIC is accompanied by a mobile app, which enables operators on the road to check in remotely. Built-in configurations can transmit alerts in the event of deviations in video and audio parameters, enabling a monitor-by-exception paradigm. An included conferencing interface lets operators communicate quickly, helping drive rapid diagnosis and resolution of problems as they arise.
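The monitor-by-exception idea can be illustrated with a small sketch: rather than showing every reading, only readings that deviate from a target by more than a tolerance produce an alert. This is a generic illustration and not MAVRIC's implementation or API. The `Alert` type, the `check_loudness` function, the channel names, and the target and tolerance values are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Alert:
    channel: str
    parameter: str
    value: float
    message: str


def check_loudness(readings, target_lkfs=-24.0, tolerance=2.0):
    """Return alerts only for channels whose loudness deviates beyond tolerance.

    The target and tolerance defaults are example values for this sketch.
    """
    alerts = []
    for channel, lkfs in readings.items():
        deviation = lkfs - target_lkfs
        if abs(deviation) > tolerance:
            direction = "above" if deviation > 0 else "below"
            message = f"{channel}: {abs(deviation):.1f} LU {direction} target"
            alerts.append(Alert(channel, "loudness", lkfs, message))
    return alerts


# Hypothetical loudness readings (LKFS) reported by three channels.
readings = {"PGM-1": -23.5, "PGM-2": -18.0, "COMM-1": -31.2}
alerts = check_loudness(readings)
for alert in alerts:
    print(alert.message)
```

Here the in-tolerance channel stays silent, and the operator's attention goes only to the two channels that need it.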

MAVRIC also integrates with software probes that run entirely in the Cloud.

For broadcasters who cannot send media off-network, Wohler offers the option to deploy media streaming servers on-premises.

MPEG SRT Monitoring: Introducing Wohler's new iVAM2-MPEG

Integrated with MAVRIC is Wohler’s latest iVAM2-MPEG in-rack monitor, capable of decoding MPEG SRT transport streams carrying MPEG2, H.264 or H.265 (HEVC) video, with bitrates as high as 35Mbps. The unit parses transport streams and presents operators with PID tables, enabling independent selection of audio and video PIDs for decoding via a simple, intuitive touch screen interface. AAC, Dolby Digital+ or MP3 audio formats are supported. This 2RU unit with a 2×4.3″ LCD interface accepts MPEG signals over IP (RJ45) or over an ASI input. The easy-to-operate touch screen interface is complemented by a built-in webserver, enabling operators to configure, upgrade and monitor audio meters remotely.
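For readers unfamiliar with PIDs: every 188-byte MPEG transport stream packet starts with a sync byte (0x47) and carries a 13-bit packet identifier (PID) that says which elementary stream or table it belongs to. The sketch below shows how a tool might tally PIDs in a buffer. It is a generic illustration, not the iVAM2-MPEG's implementation. It assumes the buffer is already packet-aligned, and the PID values other than 0x0000 (the Program Association Table) are made up for the example.

```python
from collections import Counter

TS_PACKET_SIZE = 188
SYNC_BYTE = 0x47


def count_pids(ts_data: bytes) -> Counter:
    """Count packets per PID in a buffer of aligned 188-byte TS packets."""
    counts = Counter()
    for offset in range(0, len(ts_data) - TS_PACKET_SIZE + 1, TS_PACKET_SIZE):
        packet = ts_data[offset:offset + TS_PACKET_SIZE]
        if packet[0] != SYNC_BYTE:
            raise ValueError(f"lost sync at byte offset {offset}")
        # PID: low 5 bits of byte 1 followed by all 8 bits of byte 2.
        pid = ((packet[1] & 0x1F) << 8) | packet[2]
        counts[pid] += 1
    return counts


def make_packet(pid: int) -> bytes:
    """Build a synthetic TS packet with the given PID (helper for this example)."""
    header = bytes([SYNC_BYTE, (pid >> 8) & 0x1F, pid & 0xFF, 0x10])
    return header + bytes([0xFF]) * (TS_PACKET_SIZE - 4)


# Synthetic stream: one PAT packet plus packets on two made-up PIDs.
stream = make_packet(0x0000) + make_packet(0x0100) + make_packet(0x0101) + make_packet(0x0100)
pid_counts = count_pids(stream)
print({hex(pid): n for pid, n in pid_counts.items()})
```

A full PID table additionally requires parsing the PAT and PMT sections to learn which PIDs carry video and which carry audio; the tally above is only the first step.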

Visit Wohler in Hall 10, Stand 10.B12 at IBC2025 in Amsterdam for more information. Free Registration Code: IBC1807